What it is — Cameras (fixed, trolley/robot-mounted or handheld) + computer-vision models that detect product facings, out-of-stocks, misplacements, price-tag visibility, and shelf share.

Why it matters for fixtures — it gives automated, frequent audits of whether products are front-faced, correctly placed and correctly priced, which ties directly to the merchandising quality of gondolas and endcaps.

Typical hardware & software — small IP cameras, PoE, on-prem edge boxes (NVIDIA Jetson / Intel NUC) or cloud inference; models for object detection + OCR for price labels.

Example uses & vendors — automated store audit apps, planogram-check assistants and mobile store-audit tools. Vision AI is now a mainstream tool for planogram compliance and shelf insights.

Implementation note — start with a single aisle pilot: mount 2–3 cameras, collect 2–4 weeks of images, train/tune models for your SKUs and shelf types.
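Once a pilot produces per-slot detections, the planogram check itself can be very small. The sketch below assumes a hypothetical data format (a dict of slot id to expected SKU, and a dict of slot id to the SKU the vision model reported, with `None` for an empty slot); the slot ids and SKU names are made up for illustration and do not come from any particular vendor's API.

```python
def compliance_report(planogram, detections):
    """Compare expected facings with what the vision model reported.

    planogram:  dict of slot id -> expected SKU (hypothetical format)
    detections: dict of slot id -> detected SKU, or None if the slot looks empty
    Returns (compliance score between 0 and 1, list of issues).
    """
    issues = []
    for slot, expected in planogram.items():
        seen = detections.get(slot)  # a missing slot is treated as empty
        if seen is None:
            issues.append((slot, "out_of_stock", expected, None))
        elif seen != expected:
            issues.append((slot, "misplaced", expected, seen))
    compliant = len(planogram) - len(issues)
    score = compliant / len(planogram) if planogram else 1.0
    return score, issues


# Illustrative data only
planogram = {"A1": "cola-330", "A2": "cola-330", "A3": "lemon-330", "A4": "water-500"}
detections = {"A1": "cola-330", "A2": None, "A3": "water-500", "A4": "water-500"}
score, issues = compliance_report(planogram, detections)
print(f"compliance: {score:.0%}")
for issue in issues:
    print(issue)
```

In a real pilot the hard part is producing reliable `detections`; the comparison step stays roughly this simple.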

What it is — UHF RFID tags on items + fixed under-shelf/readpoint readers; AI/ML filters noise, resolves collisions and maps reads to precise shelf zones.

Why it matters for fixtures — transforms a static gondola into a near-real-time inventory zone (fast stock counts, automated replenishment alerts, faster click-and-collect fulfilment).

Why AI is needed — basic readers produce noisy, overlapping reads; ML improves tag-to-slot mapping, reduces false negatives and predicts missing facings. RFID combined with AI is increasingly being adopted to make shelf inventory reliable at scale.

Implementation note — combine multiple antennas, calibrate per fixture (metal shelves need special tuning), and run ML models that learn read patterns per bay to improve accuracy.
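To make the tag-to-zone idea concrete, here is a minimal sketch of a baseline heuristic, not a trained ML model: each tag is assigned to the zone whose antenna hears it most strongly on average, and tags with too few reads are dropped as likely stray reads. The read format `(tag_id, antenna_id, rssi_dbm)`, the antenna-to-zone table and the `min_reads` threshold are all assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean


def assign_zones(reads, antenna_zone, min_reads=3):
    """Map each tag to the shelf zone with the highest mean RSSI.

    reads:        iterable of (tag_id, antenna_id, rssi_dbm) tuples (assumed format)
    antenna_zone: dict of antenna_id -> zone name (assumed calibration table)
    min_reads:    a zone needs at least this many reads of a tag to count
    """
    per_tag = defaultdict(lambda: defaultdict(list))
    for tag, antenna, rssi in reads:
        per_tag[tag][antenna_zone[antenna]].append(rssi)

    assignment = {}
    for tag, zones in per_tag.items():
        # Ignore zones with only a handful of reads: likely reflections or strays
        candidates = {z: mean(v) for z, v in zones.items() if len(v) >= min_reads}
        if candidates:
            assignment[tag] = max(candidates, key=candidates.get)
    return assignment


# Illustrative reads only
antenna_zone = {"ant1": "bay1", "ant2": "bay2"}
reads = (
    [("T1", "ant1", -50)] * 3
    + [("T1", "ant2", -70)] * 3
    + [("T2", "ant2", -60)]  # single stray read, filtered out
)
print(assign_zones(reads, antenna_zone))
```

A learned model would replace the mean-RSSI rule with per-bay read patterns, but a baseline like this is useful for judging how much the ML actually adds.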

What it is — mobile robots that traverse aisles, use cameras/RFID to scan shelves and feed data into analytics systems.

Why it matters — robots create continuous audit cycles without adding headcount; they pair naturally with fixed fixtures and under-shelf hardware to provide holistic store visibility. Aisle-scanning robots for inventory and shelf insights are already deployed in production today.

Implementation note — robots are ideal after you’ve proved out camera or RFID pilots; they scale auditing frequency and feed data into ML models for trend detection.

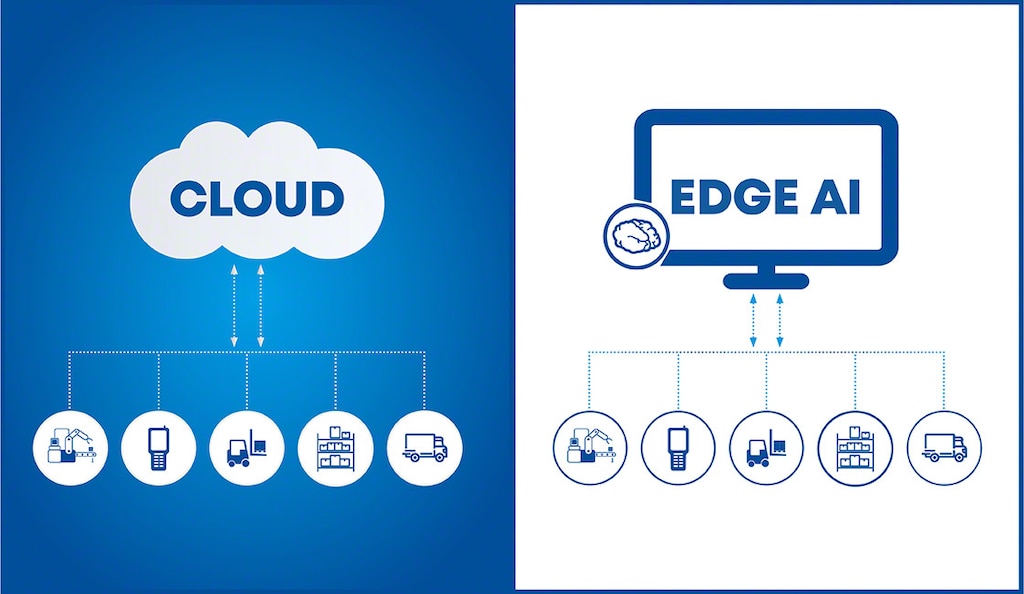

What it is — running inference on small devices mounted close to fixtures (edge devices) so insights and actions happen locally (people counting, theft detection, electronic shelf label (ESL) updates) without heavy cloud latency.

Why it matters — reduces bandwidth, preserves privacy (images processed locally), and enables real-time responses (e.g., rerouting staff to refill a bay).

Implementation note — use Jetson-class devices or efficient ML runtimes (TensorRT, ONNX Runtime) and send only aggregates to the cloud.
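The "aggregates only" pattern can be sketched as follows. This assumes the edge device already produces detection events as `(timestamp_s, bay_id, label)` tuples (a hypothetical format); the function collapses them into per-window counts, which is the only payload that would leave the store. The upload step is deliberately left out.

```python
from collections import Counter


def aggregate_events(events, window_s=60):
    """Collapse raw detection events into per-window counts.

    events:   iterable of (timestamp_s, bay_id, label) tuples (assumed format)
    window_s: aggregation window in seconds
    Returns a sorted list of small dicts; no images or raw events are included.
    """
    counts = Counter()
    for ts, bay, label in events:
        window_start = int(ts // window_s) * window_s
        counts[(window_start, bay, label)] += 1
    return [
        {"window_start": w, "bay": b, "label": lbl, "count": c}
        for (w, b, lbl), c in sorted(counts.items())
    ]


# Illustrative events only
events = [
    (0.5, "bay1", "person"),
    (10, "bay1", "person"),
    (61, "bay1", "person"),
    (62, "bay2", "empty_slot"),
]
for row in aggregate_events(events):
    print(row)
```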

What it is — generative/ML models schedule and adapt on-shelf or endcap screens based on time-of-day, inventory, promotions and observed footfall.

Why it matters for fixtures — digital endcaps and shelf-top displays can show dynamic offers tied to real-time inventory (e.g., promote slow-moving SKUs on that bay).

Example — content engines that change creatives based on local weather, inventory or shopper demographics. AI-powered digital signage has clear case studies of uplift in engagement.

Implementation note — start with one digital endcap and a simple rule-plus-ML model; measure uplift against a static advert.
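As a rough illustration of the "simple rule" half and the uplift measurement, the sketch below picks the slowest-moving in-stock SKU on a bay (by days of cover) and computes relative uplift in conversion rate between a dynamic-content period and a static-advert period. The function names, input shapes and numbers are hypothetical, and this says nothing about statistical significance, which a real test would also need to check.

```python
def pick_promoted_sku(stock, daily_sales):
    """Rule: promote the in-stock SKU with the most days of cover (slowest mover).

    stock:       dict of SKU -> units on the bay
    daily_sales: dict of SKU -> average units sold per day
    """
    best_sku, best_cover = None, -1.0
    for sku, units in stock.items():
        if units <= 0:
            continue  # never promote something that is not on the shelf
        rate = daily_sales.get(sku, 0.0)
        cover = units / rate if rate > 0 else float("inf")
        if cover > best_cover:
            best_sku, best_cover = sku, cover
    return best_sku


def relative_uplift(test, control):
    """Relative uplift in conversion rate; each argument is (units_sold, footfall)."""
    if test[1] <= 0 or control[1] <= 0:
        raise ValueError("footfall must be positive")
    test_rate = test[0] / test[1]
    control_rate = control[0] / control[1]
    if control_rate == 0:
        raise ValueError("control conversion rate is zero")
    return (test_rate - control_rate) / control_rate


# Illustrative numbers only
print(pick_promoted_sku({"a": 10, "b": 40, "c": 0}, {"a": 5, "b": 2, "c": 1}))
print(f"{relative_uplift((60, 1000), (50, 1000)):.1%}")
```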

What it is — electronic shelf labels (ESLs) automatically updated from the POS/ERP. Newer research and pilots explore RF energy harvesting (battery-less) or extremely low-power displays.

Why it matters — integrates pricing, promotion and product info directly into the fixture; battery-less ESLs reduce maintenance cost and environmental footprint. Commercial trials and research show momentum toward low-power and battery-less ESLs.

Implementation note — evaluate on high-value aisles first; ESL ROI depends on price-change frequency and labor saved.
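The dependence on price-change frequency and labor saved can be made explicit with a back-of-the-envelope payback calculation. Every input below (label cost, minutes per manual change, wage) is a placeholder to be replaced with your own figures, and the model ignores other costs and benefits such as software fees or pricing accuracy.

```python
def esl_payback_months(labels, cost_per_label, changes_per_label_per_month,
                       minutes_per_manual_change, hourly_wage, fixed_cost=0.0):
    """Months until labor savings cover the upfront ESL cost (simplified model)."""
    hours_saved = labels * changes_per_label_per_month * (minutes_per_manual_change / 60)
    monthly_saving = hours_saved * hourly_wage
    upfront = labels * cost_per_label + fixed_cost
    if monthly_saving <= 0:
        return float("inf")  # no price changes means no labor saving to recover cost
    return upfront / monthly_saving


# Placeholder numbers, not real prices
months = esl_payback_months(
    labels=1000,
    cost_per_label=8.0,
    changes_per_label_per_month=4,
    minutes_per_manual_change=1.5,
    hourly_wage=20.0,
)
print(f"payback: {months:.1f} months")
```

Halving the price-change frequency doubles the payback period in this model, which is why aisles with frequent price changes make the strongest first candidates.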

What it is — models predict when a fixture/bay will go out of stock based on sales patterns, shelf-level reads and seasonality.

Why it matters — turns shelving into a proactive node: automatic pick lists, optimized staff routes and fewer out-of-stocks (OOS).

Implementation note — feed RFID/scan + POS + historical sales into a simple ML model (exponential smoothing or gradient-boosted trees) for near-term replenishment suggestions.
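For the exponential-smoothing option, a minimal sketch looks like this: smooth recent hourly sales for a bay to get a demand rate, then divide the units currently on the shelf by that rate to estimate hours until out-of-stock. The smoothing factor `alpha` and the input shapes are assumptions; seasonality and promotions are not modelled here.

```python
def ses_forecast(sales, alpha=0.3):
    """Simple exponential smoothing; returns the smoothed level after the last point."""
    if not sales:
        raise ValueError("need at least one observation")
    level = sales[0]
    for x in sales[1:]:
        level = alpha * x + (1 - alpha) * level
    return level


def hours_until_oos(on_shelf, hourly_sales, alpha=0.3):
    """Estimate hours until the bay is empty at the smoothed sales rate."""
    rate = ses_forecast(hourly_sales, alpha)
    return float("inf") if rate <= 0 else on_shelf / rate


# Illustrative data: units sold per hour for one bay, oldest first
hourly_sales = [3, 4, 2, 5, 6, 6]
eta = hours_until_oos(on_shelf=18, hourly_sales=hourly_sales)
if eta < 4:  # hypothetical replenishment threshold
    print(f"add to pick list, about {eta:.1f} h of stock left")
else:
    print(f"ok, about {eta:.1f} h of stock left")
```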

What it is — models that flag odd movements (rapid removals from a bay, frequent grab-and-drop behavior) by combining vision, RFID and sales data.

Why it matters — fixtures are theft hotspots; AI can alert staff to suspicious activity tied to a specific gondola or endcap.

Implementation note — combine event-based rules with ML that learns typical bay behavior to minimize false alarms.
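One way to combine a hard rule with learned "typical bay behavior" is sketched below, using a z-score against the bay's own history as a stand-in for a fuller ML model. The thresholds (`hard_limit`, `z_threshold`) and the idea of counting removals per short time window are illustrative assumptions, to be tuned against real false-alarm rates.

```python
from statistics import mean, stdev


def is_suspicious(removals_now, history, hard_limit=10, z_threshold=3.0):
    """Flag a bay when removals in the current window look abnormal.

    removals_now: items removed from the bay in the current window
    history:      removals per window for the same bay in normal trading
    """
    # Event-based rule: a very large burst is always flagged
    if removals_now >= hard_limit:
        return True
    # Too little history to learn a baseline: rely on the rule alone
    if len(history) < 2:
        return False
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return removals_now > mu
    # Learned baseline: flag only strong deviations from this bay's norm
    return (removals_now - mu) / sigma > z_threshold


# Illustrative history only
history = [1, 2, 1, 2, 1, 2]
print(is_suspicious(2, history))   # within normal range for this bay
print(is_suspicious(8, history))   # far above this bay's baseline
print(is_suspicious(12, history))  # trips the hard rule
```

Keeping the baseline per bay matters: a busy endcap and a quiet back-aisle gondola have very different normal removal rates.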

What it is — AR apps or digital-twin simulations that let merchandisers visualise planograms, test lighting, and simulate customer flows before physically changing fixtures.

Why it matters — speeds planogram rollout, reduces mistakes, and helps sell fixture upgrades to customers by showing ROI visually.

Implementation note — create digital twins of store bays and test LED strip placements and endcap layouts before install.

What it is — AI agents that can coordinate tasks: order ESL updates, schedule restocks, trigger promotions, and interface with store staff via voice/alerts.

Why it matters — moves store automation beyond analytics into action. Deploy carefully with governance and human-in-the-loop controls.